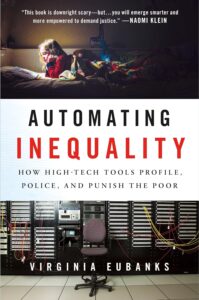

What We’re Learning: “Automating Inequality: How High-Tech Tools Profile, Police, and Punish The Poor” by Virginia Eubanks

For this edition of What We’re Learning, I chose Automating Inequality: How High-Tech Tools Profile, Police, and Punish The Poor by Virginia Eubanks, a political science professor at the University at Albany, SUNY, founding member of the Our Data Bodies Project, and fellow at New America.

The dawn of the information age in the mid-twentieth century has resulted in profound shifts in how we make decisions for ourselves and others. In nearly every aspect of life, our choices are now shaped by data mining, policy algorithms, and predictive risk models.

In Automating Inequality: How High-Tech Tools Profile, Police, and Punish The Poor, Eubanks exposes how the fields of health care and human services have become increasingly automated, and how information technology systems have largely replaced the human-centered caseworker model in determining who receives support. Eubanks demonstrates that, despite promises to reduce errors, prevent fraud, and distribute resources more efficiently, many of these systems are in fact deeply biased, invasive, and punitive, causing significant harm to the nation’s most vulnerable communities.

Efforts to automate the distribution of government aid did not grow out of a desire to help more of the nation’s poor, but out of a wave of political anxiety about rising welfare costs, fraud, and inefficiency that arose in the 1970s and that Ronald Reagan helped amplify. Fraud represents only a small fraction of payments, yet Reagan’s image of the “welfare queen” took hold and persists to this day. Politicians began commissioning expansive modern technologies that promised to save money by distributing aid more efficiently. Computers were marketed as neutral tools for shrinking public spending and fraud, but in reality, these systems were built to increase scrutiny and surveillance of welfare recipients. These technologies were not designed to help people, but to play “gotcha.” This logic reflects a broader philosophy: that it is preferable to deny benefits to many eligible applicants than to risk one ineligible person receiving support. As a result, these systems have been designed (and continue to be designed) to prioritize denial over access.

Eubanks argues that this is because, “collectively, we care less about the actual suffering of those living in poverty and more about the potential threat they might pose to others.” Our new systems require poor people to submit vast amounts of personal data, then scrutinize that data for reasons to deny benefits. Some people are deterred from seeking public resources at all by a desire to keep their labor, spending, sexuality, or parenting private. Those who are not deterred often find that their data is used to punish and criminalize them.

This unchecked surveillance and experimentation on the poor represents a new form of discrimination. Those with means would never consent to government aid being dependent on the data collection and types of analysis these systems perform (and make no mistake: the government spends billions every year providing aid to middle- and upper-class families in the form of tax credits, deductions, and below-market-rate loans). It is only the poor who have no choice but to comply.

Middle- and upper-class families regularly access support and intervention as well, but the data generated in those relationships (with nannies, babysitters, private tutors, therapists, doctors, and rehabilitation centers) is not collected and used to police or punish families by denying access to government aid. Imagine if a middle-class family’s ability to claim a mortgage credit on their tax return were affected by whether they or a family member had ever needed therapy. Ultimately, this is not neutrality, but a profound imbalance in whose dignity is protected and whose is put at risk.

The stories Eubanks shares of individuals denied basic benefits are difficult to forget, as is the unsettling realization that the ability to mask injustice through “neutral” technological systems will ultimately harm all but the wealthiest among us. Eubanks demonstrates very clearly that these systems are not neutral. Furthermore, “when automated decision-making tools are not built to explicitly dismantle structural inequities, their speed and scale intensify them.”

The insidiousness of these programs, combined with our misplaced faith in technology as an unbiased tool, presents a real danger to everyone except the very wealthy. Data shows that poverty is not a rare experience in America, nor is there a “culture of poverty.” As income inequality continues to grow, so will the number of middle-class citizens in need of government aid to live dignified lives.

If we continue to criminalize access to aid, or worse, use data to predict and penalize future need, we are not solving poverty; we are systematizing it. These tools do not eliminate risk or inefficiency. Instead, they shift both onto those least able to absorb them, normalizing a system that treats vulnerability as a liability rather than a shared human condition. In doing so, we move further away from any meaningful commitment to dignity or care. What emerges is not a more rational or efficient system, but a more punitive one, where inequality is not only preserved but accelerated at scale, in a society increasingly willing to accept that outcome. For those shaping policy, funding, and systems, the opportunity is clear: to ensure that the tools we build expand access and dignity, rather than restrict them.